You Won’t Believe These 9 Real World Artificial Intelligence Applications in 2022

Artificial intelligence is one of the most exciting and rapidly growing fields in computing today. But what exactly is it? Simply put, artificial intelligence is a technology that allows machines to learn and perform tasks that usually require human intelligence, such as understanding natural language and recognizing objects.

At its core, artificial intelligence (AI) is the ability of a machine or computer program to think and learn. It enables devices to analyze data more quickly and accurately than humans can, allowing them to make decisions based on patterns and insights gleaned from that data. AI technology has been used in many areas, including robotics, natural language processing, facial recognition, video gaming, and autonomous vehicles.

Computer scientist John McCarthy coined the term “artificial intelligence” for the 1956 Dartmouth Conference. It describes any technique or tool that mimics human cognitive abilities, such as problem-solving, pattern recognition, or learning from experience. Machine learning, one of the main branches of AI, is commonly divided into supervised learning and unsupervised learning.

Role of big data in AI

Big data plays an important role in the development of artificial intelligence. Big data provides AI systems with large amounts of structured and unstructured data to learn from, allowing them to build more accurate models to identify patterns and make predictions.

What are the four types of AI?

The four main types of artificial intelligence (AI) are narrow, general, strong, and super.

Narrow AI is the most commonly encountered type of artificial intelligence today. It consists of systems specifically designed to solve a single task or problem, such as facial recognition or playing chess. This type of AI is limited in scope but can still provide valuable insights into a specific issue.

General AI is artificial intelligence featured in movies and books such as “The Terminator.” It can perform various tasks without being specifically programmed to do so. General AI requires advanced machine learning algorithms and technologies, making it much more challenging to build and use than narrow AI.

Strong AI is a type of artificial intelligence that can perform human-level cognitive tasks such as problem-solving, reasoning, decision-making, and creativity. However, this type of AI requires more machine learning algorithms and technologies, making it even more complex to build and utilize than general AI.

Finally, super AI is an artificial intelligence that would exceed even the most advanced human intelligence capabilities. This type of AI remains hypothetical and has yet to be developed.

AI in business

Today, AI technology is being used in a variety of ways. For example, businesses are using AI to understand their customers better, optimize pricing, and automate customer service. Additionally, healthcare professionals leverage the technology to diagnose diseases and develop personalized patient treatments. AI is also being used to improve cybersecurity, manage transportation networks, and create more efficient energy systems.

Looking ahead, artificial intelligence is likely to become even more integral in our lives as its uses continue to expand into new fields. We expect to see it utilized in education, finance, agriculture, and many other industries soon. This increased usage will result in a greater need for responsible AI development that considers ethical implications and protects users’ privacy and security.

This blog post will look at nine real-world applications of artificial intelligence that are already making a difference in our lives! But before we get started, we will explore machine learning a bit more and introduce some common terms so you can easily follow the rest of this article.

Machine learning

Machine learning is a subset of artificial intelligence that allows computers to learn from data without being explicitly programmed. Instead, the computer “learns” through trial and error, adjusting the parameters of its model until its predictions improve. Machine learning has become increasingly important in recent years as businesses use it for tasks such as product recommendations, fraud detection, and spam filtering.

How does machine learning work

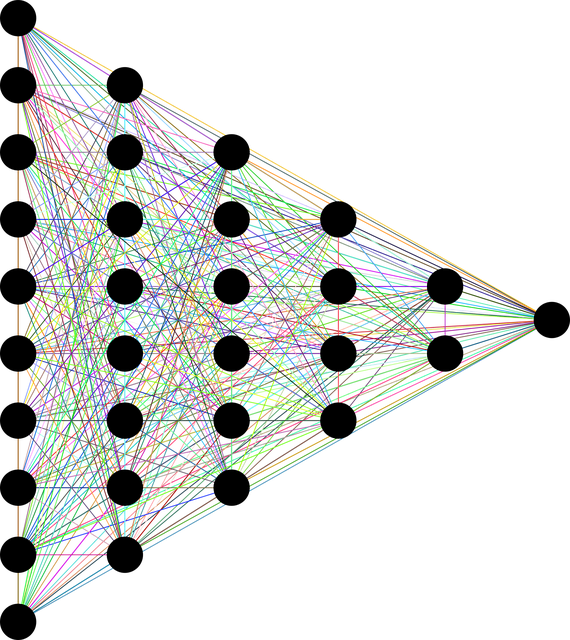

At its core, machine learning is the process of analyzing data to make predictions or decisions. This is done by creating algorithms designed to detect data patterns that can be used for making predictions or decisions. These algorithms analyze various factors such as input variables, output results, and potential outcomes associated with each variable.

The algorithms use statistical techniques such as linear regression, decision trees, and neural networks to identify patterns in the data and build models to understand it better. Once these models are created, they can be used to make predictions or decisions. For example, a company that wants to predict how many customers will buy a product could train a model on past sales data to determine which factors are most likely to result in the highest sales.

What are supervised and unsupervised learning?

Supervised learning is a type of machine learning in which data points are labeled with the desired output. This allows the model to learn from existing data and make predictions based on new input. Common supervised learning tasks include classification, regression, and recommendation systems.

Unsupervised learning is a type of machine learning that does not require labeled data. Instead, the algorithm is given a dataset and must identify patterns and relationships in the data without any external guidance. Common unsupervised learning tasks include clustering, anomaly detection, and dimensionality reduction.
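To make the distinction concrete, here is a minimal Python sketch. The measurements, the "small" and "large" labels, and the function names are all made up for illustration. The supervised part learns from labeled examples using a simple nearest-centroid rule, while the unsupervised part groups the same numbers into two clusters without ever seeing a label (a bare-bones version of k-means with k fixed at 2).

```python
from statistics import mean

def train_nearest_centroid(points, labels):
    """Supervised: use the provided labels to compute one centroid per class."""
    centroids = {}
    for label in set(labels):
        members = [p for p, l in zip(points, labels) if l == label]
        centroids[label] = mean(members)
    return centroids

def classify(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))

def two_means(points, iterations=10):
    """Unsupervised: split points into two clusters without any labels.

    Assumes the data contains at least two distinct values.
    """
    low, high = min(points), max(points)  # start from the two extremes
    for _ in range(iterations):
        group_low = [p for p in points if abs(p - low) <= abs(p - high)]
        group_high = [p for p in points if abs(p - low) > abs(p - high)]
        low, high = mean(group_low), mean(group_high)
    return sorted(group_low), sorted(group_high)

# Hypothetical measurements and labels, invented for this example.
points = [1.0, 1.2, 0.9, 5.0, 5.3, 4.8]
labels = ["small", "small", "small", "large", "large", "large"]

centroids = train_nearest_centroid(points, labels)
print(classify(centroids, 4.0))  # large
print(two_means(points))         # ([0.9, 1.0, 1.2], [4.8, 5.0, 5.3])
```

Notice that the clustering step recovers the same two groups, but it cannot name them. Attaching meaning to each cluster is still up to a person.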

Classification and regression

The main difference between classification and regression is that, in classification tasks, the output is a discrete value, whereas, with regression tasks, the output is a continuous value.

Classification problems involve mapping input data to predetermined labels or classes. This problem requires an algorithm to learn from existing datasets to make accurate predictions about new data points. In contrast, regression problems involve predicting the value of a continuous variable based on existing datasets.

Overall, classification and regression are two distinct types of machine-learning tasks that can be used to solve different kinds of problems. Classification is best suited for situations with a limited number of possible outcomes, whereas regression is best suited for cases where the desired output is a continuous value. Therefore, it is essential to understand the difference between these two types of machine-learning tasks to choose the correct algorithm for your specific problem. All in all, both classification and regression are essential tools to have in any aspiring machine learning engineer’s toolbox!

Training a machine learning algorithm

Once the algorithms and models are created, they must be trained to make predictions or decisions accurately. Training a machine learning model involves feeding it with data and adjusting its parameters until it produces the most accurate results possible. This process can take days or even weeks, depending on the complexity of the task, though specialized hardware such as GPUs can speed it up significantly. Once the model is trained, it can be used for making predictions or decisions in an automated fashion. Below are a few of the most common algorithms used to build machine learning models.

Linear Regression

Linear regression is a machine learning algorithm used to model relationships between variables. This type of algorithm works by creating a linear model to fit the data and using this model to make predictions about future values.

The process of fitting the data with a linear model involves determining the best coefficients for each variable in the equation so that it can accurately represent the underlying relationship between those variables. Once these coefficients have been identified, they are used to calculate an output value for any given input values.

Linear regression is one of the most commonly used algorithms in machine learning due to its simplicity and effectiveness. For example, it is useful when trying to predict continuous values such as sales figures or stock prices. Additionally, linear regression models can uncover critical insights about the underlying data, such as correlations between different variables. All in all, linear regression is a practical and versatile predictive modeling and analysis tool.
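As a small illustration of finding the "best coefficients", the sketch below fits a straight line to a handful of points using the standard least-squares formulas for a single input variable. The advertising and sales numbers are invented, and the function names are only for this example.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance of x and y divided by the variance of x.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

def predict(slope, intercept, x):
    """Use the fitted coefficients to calculate an output for a new input."""
    return slope * x + intercept

# Hypothetical data: advertising spend (x) and resulting sales (y).
ad_spend = [1, 2, 3, 4]
sales = [3, 5, 7, 9]

slope, intercept = fit_line(ad_spend, sales)
print(slope, intercept)              # 2.0 1.0
print(predict(slope, intercept, 5))  # 11.0
```

With more than one input variable the same idea applies, but the coefficients are usually found with matrix methods or an iterative optimizer rather than this one-variable shortcut.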

Decision Tree

A decision tree is a popular machine-learning algorithm for classification and regression tasks. This algorithm makes predictions or decisions based on a series of questions or criteria that can be used to split the data into different categories.

The decision tree algorithm begins by selecting the “best” attribute from the dataset, which is then used as the first split. The best attribute is determined by evaluating each attribute in terms of its ability to accurately classify cases within the dataset. The remaining attributes are then evaluated using similar criteria at each branch until all possible outcomes have been identified and leaf nodes are assigned in the decision tree.

Overall, decision trees are a practical and versatile predictive modeling and analysis tool. They are handy for problems that involve complex input data and require quick, accurate decisions. Additionally, decision trees are highly interpretable, making them easy to understand and explain. This can help make creating predictive models much less daunting for beginners in machine learning. All in all, the decision tree algorithm is a powerful tool for any aspiring machine learning engineer.
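How might an algorithm decide which attribute is "best"? One common measure is Gini impurity, which is low when a split produces groups that mostly share the same label. The sketch below assumes that criterion (other measures, such as information gain, are also widely used) and runs it on a tiny invented dataset. The attribute names and rows are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 means every label in the group is the same."""
    total = len(labels)
    return 1.0 - sum((count / total) ** 2 for count in Counter(labels).values())

def impurity_after_split(rows, attribute, target):
    """Weighted average impurity of the groups created by splitting on attribute."""
    groups = {}
    for row in rows:
        groups.setdefault(row[attribute], []).append(row[target])
    total = len(rows)
    return sum(len(group) / total * gini(group) for group in groups.values())

def best_attribute(rows, attributes, target):
    """Pick the attribute whose split leaves the purest groups."""
    return min(attributes, key=lambda a: impurity_after_split(rows, a, target))

# Hypothetical shop data: did a visitor buy something?
rows = [
    {"weather": "sunny", "weekend": "yes", "bought": "yes"},
    {"weather": "sunny", "weekend": "no", "bought": "yes"},
    {"weather": "rainy", "weekend": "yes", "bought": "no"},
    {"weather": "rainy", "weekend": "no", "bought": "no"},
]

print(best_attribute(rows, ["weather", "weekend"], "bought"))  # weather
```

A full decision tree would repeat this selection on each resulting group until the groups are pure or another stopping rule is reached.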

Random Forest

Random forest is an ensemble machine learning algorithm that can be used to make predictions and decisions. In essence, it works by creating multiple decision trees, which together form the “forest,” and combining the results of each tree to create a single output. Each tree in the random forest is built from a random sample of the data points, which makes the forest as a whole less likely to overfit the data than any single tree.

When predicting an outcome with a random forest, each decision tree makes its own prediction based on what it learned from its sample. For classification, the final result is determined by majority voting: whichever prediction receives the most “votes” from the decision trees is chosen. For regression, the trees’ predictions are averaged. This makes the random forest algorithm robust, as no single tree can dominate the result.

A random forest is an effective tool for classification and regression tasks, as it can handle a wide variety of data types. Additionally, because the output from each tree is combined, it is less prone to overfitting than a single decision tree. This makes the random forest algorithm a great choice when accuracy matters, although the combined model is harder to interpret than a single tree. All in all, the random forest algorithm is a powerful machine learning tool that can be used to quickly and accurately generate predictive models and decisions.
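The two ideas at the heart of a random forest, random samples of the data and majority voting, fit in a few lines of Python. This is a deliberately simplified sketch: each "tree" is only a one-question stump on a single number, the data and labels are invented, and real implementations also randomize which features each tree may use.

```python
import random
from collections import Counter

def train_stump(sample):
    """Fit a one-question tree: is x above a threshold?"""
    lows = [x for x, label in sample if label == "low"]
    highs = [x for x, label in sample if label == "high"]
    if not lows or not highs:
        # The random sample happened to contain only one class.
        only = "low" if lows else "high"
        return lambda x: only
    threshold = (max(lows) + min(highs)) / 2
    return lambda x: "high" if x > threshold else "low"

def train_forest(data, n_trees=25, seed=0):
    """Train each stump on its own random sample drawn with replacement."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        sample = [rng.choice(data) for _ in data]
        forest.append(train_stump(sample))
    return forest

def forest_predict(forest, x):
    """Majority vote: the label predicted by the most trees wins."""
    votes = Counter(tree(x) for tree in forest)
    return votes.most_common(1)[0][0]

# Hypothetical labeled data: (value, label).
data = [(1, "low"), (2, "low"), (3, "low"), (8, "high"), (9, "high"), (10, "high")]

forest = train_forest(data)
print(forest_predict(forest, 1.5))  # low
print(forest_predict(forest, 9.5))  # high
```

Even if a few stumps see an unlucky sample and always answer with one label, the vote of the remaining trees outweighs them.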

Do machine learning models get better over time?

The machine learning process is iterative, so models can continuously improve over time as more data is collected and analyzed. This allows companies to refine their models and make better predictions or decisions based on the new information. In addition, as the algorithms become more precise and accurate, they can automate specific tasks, such as providing personalized product recommendations or identifying potential fraud cases.

In conclusion, machine learning is a powerful tool for analyzing data and making predictions or decisions without explicit programming. By leveraging statistical techniques such as linear regression, decision trees, and neural networks, machines can learn from data and make better decisions faster.

Difference between machine learning and deep learning

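The main difference between machine learning and deep learning is how the models learn. Traditional machine learning algorithms often rely on features that people select or engineer by hand. In contrast, deep learning algorithms use neural networks with many layers to learn useful features directly from raw data, which suits complex tasks such as computer vision and speech recognition.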

Machine Learning algorithms use statistical techniques to make predictions from existing data. The most common types of Machine Learning techniques include linear regression, decision trees, random forests, and neural networks. These algorithms can be used for various tasks, such as predicting stock prices or classifying images.

Deep learning

Deep Learning is a subset of Machine Learning which uses more complex models and techniques to solve difficult problems such as image recognition or natural language processing. Deep learning algorithms usually employ artificial neural networks: layers of interconnected nodes that learn from data by adjusting the strength of the connections between them. By analyzing vast amounts of data, Deep Learning algorithms can often make more accurate predictions on such problems than traditional Machine Learning algorithms.
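To see why stacking layers matters, consider the tiny network sketched below. It computes XOR ("one or the other, but not both"), something a single artificial neuron cannot do on its own. The weights here are chosen by hand purely for illustration; in a real deep learning system they would be learned from data, and the networks are vastly larger.

```python
def step(x):
    """Activation function: the neuron fires (1) only if its input is positive."""
    return 1 if x > 0 else 0

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the activation."""
    return step(sum(i * w for i, w in zip(inputs, weights)) + bias)

def xor_network(a, b):
    # Hidden layer: one neuron acts like OR, the other like AND.
    h_or = neuron([a, b], [1, 1], -0.5)
    h_and = neuron([a, b], [1, 1], -1.5)
    # Output layer: fires when OR is on but AND is off.
    return neuron([h_or, h_and], [1, -1], -0.5)

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, xor_network(a, b))
```

The hidden layer turns the raw inputs into intermediate features, and the output layer combines those features. Deep networks repeat this pattern across many layers.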

1. Natural Language Processing (NLP)

NLP is a process that helps computers understand human language. This is done by understanding the structure and meaning of sentences and the relationships between words. NLP can be used for various purposes, including sentiment analysis, automatic machine translation, and voice recognition.
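Sentiment analysis is one of the easiest NLP tasks to sketch. The example below counts positive and negative words from two small, hand-picked word lists. Both lists and the function names are assumptions made for this illustration, and production systems generally rely on trained models rather than fixed word lists.

```python
import re

# Hypothetical word lists, chosen only for this example.
POSITIVE = {"great", "love", "excellent", "helpful", "fast"}
NEGATIVE = {"bad", "slow", "broken", "terrible", "hate"}

def tokenize(text):
    """Lowercase the text and split it into words."""
    return re.findall(r"[a-z']+", text.lower())

def sentiment(text):
    """Label text by comparing counts of positive and negative words."""
    tokens = tokenize(text)
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("I love the fast delivery!"))    # positive
print(sentiment("The app is slow and broken."))  # negative
print(sentiment("It arrived on Tuesday."))       # neutral
```

A word-counting approach like this misses negation and sarcasm ("not great" still counts as positive), which is one reason modern NLP leans on models that consider context.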

NLP use cases

NLP is being used in various industries to create more efficient and accurate results. For example, it is being used by customer service departments to understand user feedback better and provide improved customer experiences. In the finance industry, NLP is helping companies better recognize and analyze financial data for fraud detection and risk management. In health care, NLP is being utilized to aid in diagnosis and treatment planning. Additionally, machine translation services are using NLP to improve translations of documents for those who may not speak the original language.

There are also many examples of devices that use NLP to operate. Amazon’s Alexa and Google Home are great examples of massively successful devices that effectively utilize NLP.

Challenges ahead of NLP

There are still several challenges that need to be addressed with NLP technology. One challenge has been how difficult it can be to accurately interpret complex human language into structured data sets that are useful for analysis or machine learning. Additionally, the data needed to train NLP systems can be challenging to collect and may contain bias. Researchers are developing new techniques to address these challenges, such as deep learning and reinforcement learning, that can better identify patterns in natural language data sets.

Natural Language Processing plays an essential role in transforming businesses and industries worldwide. It is being used for various purposes, from customer service to fraud detection and more. While there are still some challenges to address with this technology, researchers continue to make progress toward making NLP even more accurate and robust. With further developments, it could soon become an integral part of any successful business or industry.

2. Speech recognition

Have you ever wondered how your phone can understand what you’re saying? How about those voice commands on your computer? Speech recognition is a technology that allows devices to interpret human speech. This can be done in several ways, but the basic idea is that the device takes in sound waves and converts them into text or actions.

Speech Recognition approaches and methods

The first method used for speech recognition is based on the acoustic model. This involves capturing sound waves and analyzing them. The device will take in a person’s voice, break it down into small parts, and compare it to known patterns of words or phrases. If there is a match, the device can recognize what was said. This system works best with clear audio recordings and can be used for tasks like automated customer service systems or voice-activated devices.
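The "compare it to known patterns" step can be pictured as a nearest-match search. In the sketch below, each known word is stored as a short list of numbers standing in for its sound features. Those numbers, the distance threshold, and the function names are all invented for illustration, since real systems extract much richer features from the audio.

```python
import math

# Hypothetical stored patterns: word -> made-up feature values.
TEMPLATES = {
    "yes": [0.9, 0.1, 0.4],
    "no": [0.2, 0.8, 0.5],
}

def distance(a, b):
    """Euclidean distance between two feature lists of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def recognize(features, templates=TEMPLATES, max_distance=0.3):
    """Return the closest known word, or None if nothing is close enough."""
    word, best = min(
        ((w, distance(features, t)) for w, t in templates.items()),
        key=lambda pair: pair[1],
    )
    return word if best <= max_distance else None

print(recognize([0.85, 0.15, 0.4]))  # yes
print(recognize([0.5, 0.5, 0.9]))    # None
```

The threshold is what lets the system say "I did not catch that" instead of guessing, which matters when the recording is noisy.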

Another approach to speech recognition is based on artificial intelligence (AI). Instead of relying solely on audio recordings, AI systems use sophisticated algorithms that learn from data sets and recognize spoken words. As a result, these systems can identify nuances in language and understand the context better than acoustic models. AI-based methods are used in products like Alexa, Siri, and Google Assistant.

Biometric recognition

The third method is based on biometric recognition. This involves analyzing the unique characteristics of a person’s voice, sometimes alongside other biological signals such as facial features, to identify who is speaking rather than what is being said. Biometric systems are often used for authentication and can be found in devices that only a particular user should be able to access.

No matter which system is used, all speech recognition technologies depend on the same basic principle: capturing sound waves and turning them into actionable information. However, with advances in artificial intelligence and machine learning, these systems are becoming even more accurate at understanding what people say! So next time you use voice commands or automated customer service software, remember how amazing it is that your device can understand you. This is the power of speech recognition!

3. Image recognition

The human brain is a fantastic thing. It can recognize faces in a crowd, remember childhood memories, and process vast amounts of everyday life information or new data. But even the human brain has its limits. That’s where artificial intelligence comes in. AI can help us do things that are impossible for humans, including recognizing images with incredible accuracy.

AI-driven image recognition technology has allowed us to identify objects in pictures much faster and more accurately than ever before. This technology is powered by deep learning, a form of AI that uses large datasets and algorithms to interpret images. The resulting models can recognize complex patterns and detect subtle differences between images with remarkable accuracy. Businesses, from retail stores to banks, are now using this technology to quickly analyze, sort, and identify images such as products or customers. For example, a bank could use AI-driven image recognition technology to help detect fraud by checking that a customer’s photo matches the one on their identification documents.

Benefits and use cases of AI-driven image recognition

The benefits of AI-driven image recognition extend beyond businesses, however. Consumers are also reaping the rewards of this intelligent technology with more personalized recommendations based on their behavior and interests. For example, companies like Amazon have applied AI-driven image recognition to improve their product search capabilities, making it easier for customers to find what they need in seconds.

AI-driven image recognition is also being used in the healthcare industry, where it can be used to detect tumors and other medical conditions from images. This technology can save lives by helping medical professionals diagnose and treat patients more quickly and accurately.

Artificial intelligence transformation of the business world

AI-driven image recognition is transforming how businesses and consumers interact with images. From faster product search capabilities to better fraud detection, this technology brings a world of new possibilities that weren’t available just a few years ago. With its vast potential for streamlining processes, improving accuracy, and saving lives, it’s no surprise that AI-driven image recognition is quickly becoming an essential part of our everyday lives.

4. Robotics

Robotics is the design and use of robots for manufacturing, assembly line operations, and other industrial applications. Artificial intelligence is used in robotics to enable robots to understand instructions and respond accordingly, making them more efficient and flexible than ever before.

Robots have been a staple of science fiction tales for decades, but they are now becoming a reality. While they are often portrayed as cold and emotionless machines, the truth is that they are becoming more and more sophisticated due to advances in machine learning and artificial intelligence.

As stated, machine learning is a form of artificial intelligence that enables computers to learn from data without being explicitly programmed. By using algorithms, machines can identify patterns in large datasets and use them to make predictions or take specific actions. In the context of robotics, this technology can allow robots to “learn” how to perform particular tasks more quickly and efficiently. For instance, robots programmed to assemble products can be taught how to do it faster and more accurately using machine learning algorithms.

How artificial intelligence and robotics come together

Artificial intelligence, on the other hand, goes beyond just learning from data. AI enables robots to make decisions based on what they “know,” allowing them to interact with their environment in ways that would not have been possible before. Robots can now recognize objects, respond to voice commands, and even execute complex tasks such as playing chess or completing household chores like folding laundry. The applications for artificial intelligence are truly limitless, and this technology is helping robots become more capable than ever before.

In combination with machine learning, artificial intelligence is transforming the capabilities of robots today. By learning from data and making decisions on their own, robots can complete tasks that would have been too difficult for them to do before. Whether it’s helping businesses manufacture products more efficiently or providing assistance in our homes, the possibilities are endless regarding what robots can do with these advancements.

The roadmap and the future of AI-driven robotics

We are still in the early stages of machine learning and artificial intelligence technology. Still, there is no doubt that they will continue to evolve and become even more powerful over time. As a result, robots will only become more intelligent and capable in the years ahead as they benefit from these technologies. With so much potential on the horizon, it’s exciting to think about all the ways robots could be used in the future.

Overall, machine learning and artificial intelligence are having a profound effect on the capabilities of robots. By learning from data and making decisions independently, these technologies allow robots to become increasingly sophisticated and valuable for businesses and consumers. As these technologies continue to evolve, robots will likely become more intelligent and even more capable in the years to come. It’s an exciting time for robotics, and we can’t wait to see what the future holds.

5. Automated customer service

Automated customer service systems leverage artificial intelligence technology to answer customer queries quickly and accurately without needing manual input from a human operator. This technology has enabled businesses to offer faster support services with fewer resources by reducing the need for customer service representatives.

6. Virtual personal assistants

Virtual personal assistants (VPAs) are becoming increasingly popular as people’s schedules get busier. These assistants use artificial intelligence to help you schedule appointments, send reminders, and manage your calendar. However, VPAs can be even more efficient if they use artificial intelligence to learn about your preferences and habits.

How virtual personal assistants work

AI allows VPAs to learn from user data and become more personalized. By analyzing the user’s behavior, AI can better understand their preferences, habits, and needs. This allows the VPA to provide tailored suggestions and proactive notifications about upcoming events or tasks. For example, if a user regularly schedules a meeting every Monday at 9 am, their VPA can automatically remind them about it. AI can also help VPAs anticipate user needs before they even need to ask. For example, if you’re running late for a meeting, your VPA can offer alternative routes or suggest a new start time.
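Spotting a habit like "a meeting every Monday at 9 am" can start with nothing more than counting. The sketch below looks through a hypothetical meeting history and reports any weekday and hour that shows up at least three times. The dates, the threshold, and the function name are assumptions for this example, not how any particular assistant works.

```python
from collections import Counter
from datetime import datetime

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]

def recurring_slots(meetings, min_count=3):
    """Return (weekday, hour) pairs that appear at least min_count times."""
    counts = Counter((WEEKDAYS[m.weekday()], m.hour) for m in meetings)
    return sorted(slot for slot, n in counts.items() if n >= min_count)

# Hypothetical calendar history.
history = [
    datetime(2022, 1, 3, 9),    # Monday, 9 am
    datetime(2022, 1, 10, 9),   # Monday, 9 am
    datetime(2022, 1, 17, 9),   # Monday, 9 am
    datetime(2022, 1, 12, 14),  # Wednesday, 2 pm (a one-off)
]

print(recurring_slots(history))  # [('Monday', 9)]
```

Once a recurring slot is found, the assistant could offer to set up a standing reminder for it. More capable assistants would use learned models that also weigh things like who attends and where the meeting takes place.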

AI-powered VPAs are also capable of understanding natural language and providing personalized results. By analyzing speech patterns and the context of conversations, AI can accurately interpret what users say and provide the most relevant information based on that conversation. This means that users can ask more complex questions and get better answers.

AI makes virtual personal assistants much more efficient by allowing them to understand users better, interpret natural language commands, and become more intelligent over time. With AI-powered VPAs, users can get highly customized and tailored recommendations and proactive notifications that make life easier. As AI continues to evolve and improve the capabilities of VPAs, we will continue to see more intelligent assistants in our daily lives!

7. Automated Intelligence and Autonomous vehicles

Self-driving cars are all the rage these days, and for a good reason: they have the potential to make transportation safer and more efficient. How do these vehicles sense their surroundings and decide where to go and what to avoid?

How autonomous vehicles work

Autonomous vehicles are equipped with various sensors, such as radar, cameras, and lidar. These sensors detect nearby objects and obstacles in the vehicle’s path. This data is then used to create a 3D map of the vehicle’s surroundings, which it uses to plan its route.

The autonomous car also uses artificial intelligence (AI) algorithms, often trained on massive cloud platforms, to understand its environment and decide where it should go. AI systems can be pre-programmed with specific rules for navigating traffic and avoiding hazards; they can also learn from experience by processing data from past journeys and making adjustments based on what worked best in similar situations.

How autonomous cars avoid collisions

In order for autonomous cars to avoid collisions, they need to be able to identify and respond to other vehicles, pedestrians, and obstacles in their environment. To do this, autonomous cars use computer vision technology to process images from cameras mounted on the vehicle. This allows them to identify objects (such as traffic lights, street signs, or pedestrians) and recognize patterns in their environment.

Once the autonomous vehicle has identified its surroundings, it needs to make decisions about navigating around them safely. To do this, it uses algorithms that weigh different factors, such as speed limits, road conditions, and traffic flow, to determine the safest route for the car.

Finally, once a route is determined and set into motion by the AI system, the self-driving car needs a way of controlling itself while following that path. It uses advanced control algorithms that consider the car’s speed, acceleration, and braking. This allows the car to make minor adjustments to maintain a safe distance from other vehicles and obstacles while following its route.
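As a rough picture of how a controller might keep a safe distance, the sketch below uses a simple proportional rule: the further the actual gap is from the desired gap, the harder the car accelerates or brakes, within fixed limits. The two-second time gap, the gain, and the limits are illustrative assumptions, and real vehicles use far more sophisticated controllers.

```python
def acceleration_command(gap_m, speed_mps, time_gap_s=2.0, gain=0.5,
                         max_accel=2.0, max_brake=-5.0):
    """Return a target acceleration in m/s^2 (negative means braking).

    gap_m: current distance to the vehicle ahead, in meters.
    speed_mps: the car's own speed, in meters per second.
    All default parameter values are hypothetical.
    """
    desired_gap = speed_mps * time_gap_s  # keep a fixed time gap behind the car ahead
    error = gap_m - desired_gap           # positive: too far back, negative: too close
    command = gain * error                # proportional response to the error
    return max(max_brake, min(max_accel, command))

print(acceleration_command(gap_m=40, speed_mps=20))   # 0.0 (gap is just right)
print(acceleration_command(gap_m=38, speed_mps=20))   # -1.0 (ease off slightly)
print(acceleration_command(gap_m=30, speed_mps=20))   # -5.0 (brake at the limit)
print(acceleration_command(gap_m=100, speed_mps=20))  # 2.0 (speed up, capped)
```

Running a rule like this many times per second produces the continuous stream of small adjustments described above.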

Challenges ahead of the autonomous cars industry

Though autonomous cars are making remarkable progress in terms of safety and efficiency, some challenges must be overcome before they can become commonplace on our roads. For example, some environments may contain objects or conditions that are difficult for an autonomous vehicle to detect or interpret correctly, such as foggy weather, potholes, or construction zones. Additionally, ethical questions will need to be addressed when creating AI systems that decide where and how fast a car should go.

Overall, autonomous cars are a complex and fascinating technology that has the potential to revolutionize the way we travel. With their ability to sense, comprehend and respond to their environment, they are quickly becoming an integral part of our roads – ushering in a world of safer and more efficient transportation.

8. Artificial Intelligence (AI) in Cybersecurity

AI is increasingly being used to detect and block cyber threats. For example, AI-powered solutions can scan many networks or devices and quickly identify suspicious activity or malicious code. AI can also detect abnormal user behavior, such as logins from unusual locations or a sudden surge in data traffic. This helps organizations prevent unauthorized access to sensitive information.
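A "sudden surge in data traffic" can be flagged with a basic statistical check long before any sophisticated model is involved. The sketch below compares a new measurement against the average of recent history and flags it if it sits more than three standard deviations away. The traffic figures and the threshold are made up for illustration.

```python
from statistics import mean, stdev

def is_anomalous(history, value, threshold=3.0):
    """Flag value if it lies more than threshold standard deviations from the mean.

    history needs at least two measurements for the standard deviation.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past measurement was identical, so any change stands out.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical traffic in megabytes per hour.
traffic = [100, 110, 95, 105, 90, 100]

print(is_anomalous(traffic, 108))  # False (within the normal range)
print(is_anomalous(traffic, 400))  # True (a sudden surge)
```

AI-based security tools build on the same idea of learning what "normal" looks like, but they track many signals at once and adapt as behavior changes.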

Benefits and Challenges of AI technology for Cybersecurity

Despite the many benefits AI offers, some challenges are still associated with its use. One of the main issues is that AI-based solutions require significant amounts of data to be effective. Without it, some of the algorithms that power these systems cannot accurately detect threats. Additionally, AI systems can often be fooled by malicious actors who know how to manipulate them or exploit their vulnerabilities.

On the benefits side, AI in cybersecurity can also automate specific security tasks. Automating mundane tasks, such as patching systems and monitoring logs, frees up IT staff for more meaningful work. Automation also makes it easier for organizations to respond quickly to potential threats as they are identified in real time.

Overall, artificial intelligence is transforming cybersecurity significantly and has already proven to be an invaluable tool for protecting businesses from cyber threats. While it still presents its own unique set of challenges, AI provides organizations with increased levels of security and greater efficiency in managing their networks and responding to potential threats. With more development and testing, AI-based solutions will continue to prove their worth in the fight against cybercrime.

9. Medical diagnostics

Medical diagnostics is an industry that is ripe for transformation by artificial intelligence. AI has already been used to significant effect in this area, and the trend will continue.

One of the primary uses of AI in medical diagnostics is to aid in detecting and diagnosing diseases. AI-powered technologies can identify patient data patterns that might indicate a specific illness or disorder, enabling physicians to make earlier diagnoses. For example, IBM Watson has been used to help detect Alzheimer’s Disease by analyzing brain scans and other data. AI can also detect cancer, with researchers at Stanford University using deep learning algorithms to identify skin cancer from images of lesions.

AI is also being used to help physicians make more informed treatment decisions. By analyzing patient data, AI systems can recommend treatments that are likely to work best for a particular patient’s condition. For example, Google DeepMind Health is working on an AI system that helps doctors select the most effective drugs for treating sepsis in intensive care units.

Robotic Surgery

Another application of AI in medicine is robotic surgery. Surgical robots have been developed that can perform precise movements with less risk than traditional open surgeries. These robots use AI-powered computer vision systems to identify and target tissue, allowing for greater accuracy and precision.

Finally, AI can be used in medical diagnostics to automate specific tasks. For example, AI-powered algorithms can analyze patient data and generate diagnostic reports that can be more accurate than those produced by humans. By automating these processes, physicians can spend less time on paperwork and more time focusing on patient care.

Artificial intelligence is transforming the medical diagnostics industry in a big way. Doctors can diagnose diseases earlier through AI-powered technologies and make better treatment decisions. Furthermore, robots are increasingly capable of performing complex surgical operations more accurately than ever. As AI becomes more deeply embedded into healthcare systems worldwide, the potential for improved patient outcomes is immense.

What about ethics and privacy?

The implications of AI in medical diagnostics are undoubtedly exciting. However, it is essential to remember that this technology can also bring with it ethical and privacy concerns. To ensure that AI-powered technologies are used responsibly, healthcare providers must be vigilant about protecting patient data and monitoring how these systems are used. With these considerations in mind, there is no doubt that AI will continue to revolutionize the medical diagnostics industry in the years to come.

Conclusion and Wrap up

These nine real-world applications of artificial intelligence show just how versatile and powerful this technology can be. From automated customer service to autonomous vehicles, AI is revolutionizing how we live, work, and play. With more advancements in the field expected soon, there’s no telling what other incredible applications of AI we may see.

So if you’re interested in seeing just how far artificial intelligence has come, check out these nine real-world applications for a glimpse into the possibilities! Who knows what else AI will be able to do in the future? The possibilities are truly endless.